In the first two posts in this series, I explored why it may be useful to think of agentics as a way of studying AI agents more systematically. The first post asked why we might need a science of agents at all. The second looked across disciplines to ask what different fields mean by “agent,” and proposed a working synthesis:

An agent is an entity capable of selecting and performing actions in pursuit of objectives, within an environment, under constraints.

In this post, I turn to a more practical question:

What makes an agent more or less of an agent?

In current discussions of agentic AI (and AI agents), several concepts are often blended together: agency, autonomy, intelligence, objectives, environment, governance, identity, tools, skills, memory, planning, and workflows.

My goal here is not to produce a new theory, but to build a working vocabulary for agentics that allows researchers and practitioners to discuss agents without talking past each other.

Start with the Agent as a System

For the purposes of agentics, I find it useful to treat an agent as a system, not just a model.

In modern AI systems, that system may include:

- a model or policy core,

- prompts or instructions,

- tools and APIs,

- memory or state,

- permissions and guardrails,

- and some form of orchestration.

This matters because many current AI agents are not just LLMs. They are compositions of models, tools, state, and control logic. The “agent” is the whole action-producing system.
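As a rough illustration of the system view, the sketch below bundles the components listed above into a single Python object. All names here (`AgentSystem`, `policy_core`, `allowed_tools`, and the lambda stand-ins) are hypothetical and chosen for this post; they do not mirror any particular framework's API.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Set


@dataclass
class AgentSystem:
    """Hypothetical container: the 'agent' is the whole bundle, not just the model."""
    policy_core: Callable[[str], str]                      # model or policy core
    instructions: str                                      # prompts or instructions
    tools: Dict[str, Callable[[str], str]]                 # tools and APIs
    memory: List[str] = field(default_factory=list)        # memory or state
    allowed_tools: Set[str] = field(default_factory=set)   # permissions and guardrails

    def step(self, tool_name: str, request: str) -> str:
        """Minimal orchestration: one model call followed by one permitted tool call."""
        if tool_name not in self.allowed_tools:
            raise PermissionError(f"tool not permitted: {tool_name}")
        draft = self.policy_core(f"{self.instructions}\n{request}")
        result = self.tools[tool_name](draft)
        self.memory.append(result)
        return result


# Stand-ins for a real model and a real tool (assumptions for illustration).
agent = AgentSystem(
    policy_core=lambda prompt: prompt.splitlines()[-1].strip(),
    instructions="You are a research assistant.",
    tools={"shout": str.upper},
    allowed_tools={"shout"},
)
print(agent.step("shout", "summarize this"))
```

The point of the sketch is only that the action comes out of the composition: remove the tool, the permission, or the orchestration in `step`, and the same model core no longer produces that action.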

This framing is consistent with much current AI-agent engineering. OpenAI, for example, describes agents as systems built from composable primitives such as models, tools, state/memory, and orchestration.

That does not mean everyone draws the boundary this way. In reinforcement learning, “agent” may refer primarily to the learned policy. In some LLM discussions, “agent” may refer mainly to the model plus prompt, with tools and orchestration treated as external scaffolding. Those are valid modeling choices.

But if we are trying to understand deployed agentic systems, it is usually more useful to treat the agent as the full system that perceives, decides, and acts.

Three Primary Axes of Agentic Capability

Once we treat an agent as a system, we can ask what capabilities make the system more – or less – agentic.

I find three primary axes useful:

- Agency — what the system can do

- Autonomy — how independently it chooses what to do

- Intelligence — how well it chooses what to do

These are deeply related in practice, but they are not the same thing.

Agency: What Can It Do?

I use agency to mean the system’s capacity for action in its environment.

More concretely:

Agency is the range of actions available to a system, together with its ability to execute them.

A thermostat has limited agency: it can turn heating or cooling on and off.

An RPA bot has broader agency within a software environment: it may click, copy, paste, reconcile records, submit forms, or generate reports.

An LLM-based agent with tools may have broader agency still: it can search, retrieve, write, analyze, call APIs, update records, or trigger workflows.

Agency is shaped by:

- tool access,

- permissions,

- interfaces,

- embodiment,

- APIs,

- actuators,

- and the environment in which the system operates.

This means agency is not located only “inside” the agent. It is partly determined by what the environment affords and what the agent is permitted to do.
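One simplified way to picture this is to treat the agent's usable action set as the overlap between what the environment affords and what the agent is permitted to do. The sketch below assumes exactly that; the action names and the two deployments are made up for illustration, and the model deliberately ignores how reliably the agent can execute each action.

```python
from typing import Set


def effective_actions(afforded: Set[str], permitted: Set[str]) -> Set[str]:
    """Actions that are both offered by the environment and permitted to the agent."""
    return afforded & permitted


# Hypothetical action names for a records system.
environment_affords = {"search", "read_record", "update_record", "send_email"}

# Two hypothetical deployments of the same underlying model.
read_only_deployment = {"search", "read_record", "delete_record"}
operator_deployment = {"search", "read_record", "update_record", "send_email"}

print(sorted(effective_actions(environment_affords, read_only_deployment)))
print(sorted(effective_actions(environment_affords, operator_deployment)))
```

Note that `delete_record` is permitted in the first deployment but never becomes available, because the environment does not afford it. Permission alone does not create agency, and neither does affordance alone.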

A useful shorthand:

Agency is action capacity.

Autonomy: Who Controls the Next Step?

Autonomy is one of the most overloaded terms in discussions of agents.

Sometimes people use autonomy to mean that a system can run unattended. But that is only one kind of autonomy.

I find it useful to separate execution autonomy from decisional autonomy.

Execution Autonomy

Execution autonomy is the ability to operate without continuous human supervision once triggered.

A thermostat has execution autonomy.

An RPA bot may have execution autonomy.

A scheduled data pipeline has execution autonomy.

But none of these necessarily “figures out” what to do in any rich sense. They may run independently while still following a fixed policy or script.

Execution autonomy is therefore about operation without supervision.

Decisional Autonomy

Decisional autonomy is the degree to which the system determines its own actions, either locally within a step or globally over the control flow.

This is closer to what people usually mean when they describe an AI system as “agentic.”

But decisional autonomy also comes in levels.

Local Decisional Autonomy

A system may have local decisional autonomy within a predefined step.

For example, a scripted workflow might include an LLM step such as:

“Summarize this document.”

The workflow determines that summarization should happen at this point. But the LLM still has freedom to decide what is salient, how to structure the summary, what wording to use, and how to resolve ambiguities.

So a scripted workflow with an LLM step has more decisional autonomy than a fully deterministic workflow, even if the overall sequence of steps is fixed.

Orchestration Autonomy

A stronger form is orchestration autonomy.

Orchestration autonomy is the degree to which the system can decide, revise, or control the sequence of steps at runtime.

This includes deciding:

- what to do next,

- which tool to use,

- whether to ask for clarification,

- whether to retry,

- whether to revise a plan,

- when to escalate,

- and when to stop.

The important distinction is not whether the system has an orchestrator. The orchestrator may be part of the agent. The deeper question is:

Is the orchestrator’s control logic mostly designer-specified, or dynamically determined by the agent at runtime?

In other words, is the agent following scripted orchestration, or does it have some degree of self-directed orchestration?

This maps closely to Anthropic’s distinction between workflows and agents. In Anthropic’s framing, workflows are systems where LLMs and tools are orchestrated through predefined code paths, while agents are systems where LLMs dynamically direct their own processes and tool use.

That is primarily a distinction about orchestration autonomy.
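The difference between scripted and self-directed orchestration can be sketched in a few lines. In this toy example the steps are simple string operations and `toy_chooser` is a hand-written stand-in for an LLM deciding what to do next; all names are hypothetical, and this is not how any specific framework implements the distinction.

```python
from typing import Callable, Dict, List

Step = Callable[[str], str]

# The available steps are the same in both styles of orchestration.
STEPS: Dict[str, Step] = {
    "strip": str.strip,
    "lower": str.lower,
    "dedupe_spaces": lambda s: " ".join(s.split()),
}


def run_workflow(text: str, order: List[str]) -> str:
    """Scripted orchestration: the designer fixed the sequence in advance."""
    for name in order:
        text = STEPS[name](text)
    return text


def run_agent_loop(text: str, choose_next: Callable[[str], str], max_steps: int = 10) -> str:
    """Self-directed orchestration: a chooser picks the next step, or 'stop', at runtime."""
    for _ in range(max_steps):  # the step budget is an externally imposed bound
        name = choose_next(text)
        if name == "stop":
            break
        text = STEPS[name](text)
    return text


def toy_chooser(text: str) -> str:
    """Stand-in for a model that inspects the current state and decides what comes next."""
    if text != text.strip():
        return "strip"
    if "  " in text:
        return "dedupe_spaces"
    if text != text.lower():
        return "lower"
    return "stop"


print(run_workflow("  Hello   World ", ["strip", "lower", "dedupe_spaces"]))
print(run_agent_loop("  Hello   World ", toy_chooser))
```

Both calls reach the same result here, but by different routes: the workflow always runs all three steps in the given order, while the loop runs only the steps the chooser asks for and decides for itself when to stop.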

So the autonomy spectrum looks something like this:

- Deterministic script: little decisional autonomy

- Scripted workflow with LLM steps: some local decisional autonomy

- LLM workflow with branching: moderate decisional autonomy

- Objective-driven LLM agent: stronger orchestration autonomy

- Long-running governed agent: potentially high autonomy, bounded by governance

A useful shorthand:

Execution autonomy is operating without supervision. Local decisional autonomy is freedom within a step. Orchestration autonomy is freedom over what step comes next.

Or more simply:

Autonomy is the locus and degree of control.

Intelligence: How Well Does It Choose?

If agency is about what actions are possible, and autonomy is about who controls action selection, then intelligence is about the quality of that selection.

Intelligence is the ability to select effective actions from the available action space, especially under uncertainty and constraints.

This can include:

- reasoning,

- planning,

- learning,

- generalization,

- error recovery,

- uncertainty estimation,

- self-correction,

- and knowing when to ask for help.

This is why decisional autonomy and intelligence often feel entangled. We tend to give systems more autonomy when we believe they are intelligent enough to use it well.

But the distinction still matters.

A system can have high autonomy and low intelligence: an unattended bot running brittle rules.

A system can have high intelligence and low autonomy: a powerful LLM embedded in a fixed workflow where it only answers predefined subtasks.

Another way to put it:

Intelligence is quality of choice.

Objectives and Roles: What Organizes Agentic Behavior?

Agency, autonomy, and intelligence describe what an agentic system is capable of doing. But they do not, by themselves, explain what the system is trying to accomplish.

That is the role of objectives.

An objective is not a capability axis in the same way that agency, autonomy, or intelligence are. Instead, it provides the directional structure that makes behavior intelligible as agentic. It answers the question:

What is the agent trying to bring about?

Objectives can vary in:

- specificity,

- time horizon,

- ambiguity,

- openness,

- stability,

- and authority.

A narrow objective such as “click this button” may require little intelligence or autonomy. A broad objective such as “improve customer satisfaction” requires interpretation, planning, adaptation, and judgment. In general, the more open-ended the objective, the more intelligence and decisional autonomy are needed to pursue it effectively.

It is useful to distinguish between two levels of objective structure.

Standing Role or Constitutive Objective

An agent may have a standing role or constitutive objective supplied by its design. This is akin to a system prompt, role specification, product requirement, reward function, or policy configuration.

Examples include:

- “You are a research assistant.”

- “You are a customer-support agent.”

- “You are a procurement agent operating under company policy.”

- “You are a coding assistant.”

- “Optimize for safe, helpful, and honest behavior.”

This level defines the agent’s role, norms, and persistent purpose.

Task-Level Objective

The agent may also receive concrete task-level objectives from inputs, events, prompts, triggers, workflows, monitoring systems, or other agents.

Examples include:

- “Summarize this article.”

- “Resolve this ticket.”

- “Compare these vendors.”

- “Draft this memo.”

- “Respond to this monitoring alert.”

- “Rebalance this portfolio under these constraints.”

A user request is one possible form of tasking, but not the only one. Many agents may not have a “user” in the conversational sense at all. Their inputs may come from queues, APIs, sensors, logs, scheduled jobs, market signals, or messages from other agents.

The standing role shapes how the task-level objective is interpreted. The same instruction — “find the best vendor” — will be interpreted differently by a cost-optimization agent, a compliance-constrained procurement agent, or a sustainability-focused agent.
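To make the vendor example concrete, here is a small sketch in which the same task, "find the best vendor," is resolved under three standing roles. The vendor names, the numbers, the 0.9 compliance threshold, and the scoring rules are all invented for illustration; a real agent would interpret its role in far richer ways.

```python
from typing import Callable, Dict

Vendor = Dict[str, float]

# Made-up vendor data for illustration only.
VENDORS: Dict[str, Vendor] = {
    "Acme": {"cost": 100.0, "compliance": 0.60, "sustainability": 0.50},
    "Borea": {"cost": 140.0, "compliance": 0.95, "sustainability": 0.70},
    "Cirrus": {"cost": 160.0, "compliance": 0.80, "sustainability": 0.95},
}

# Each standing role supplies its own reading of "best" (hypothetical scoring rules).
ROLES: Dict[str, Callable[[Vendor], float]] = {
    "cost_optimizer": lambda v: -v["cost"],
    "compliance_procurement": lambda v: -v["cost"] if v["compliance"] >= 0.9 else float("-inf"),
    "sustainability_focused": lambda v: v["sustainability"],
}


def find_best_vendor(role: str) -> str:
    """Same task-level objective; the standing role determines how it is scored."""
    score = ROLES[role]
    return max(VENDORS, key=lambda name: score(VENDORS[name]))


for role in ROLES:
    print(role, "->", find_best_vendor(role))
```

The task string never changes. Only the role-level scoring does, and with this toy data each role selects a different vendor.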

So objectives provide direction, while the agent’s capabilities determine how that direction is pursued:

Objectives orient behavior; agency provides action capacity; autonomy determines control over action selection; intelligence determines the quality of action selection.

The Environment: What the Agent Acts Within

Agents do not act in a vacuum. The environment provides the world in which agency becomes meaningful.

The environment provides:

- state — what can be sensed or inferred,

- dynamics — how the world changes,

- feedback — success, failure, reward, correction,

- constraints — rules, resources, latency, permissions,

- other actors — humans, agents, organizations, markets,

- affordances — the actions that are actually possible.

In physical robotics, the environment includes the physical world.

In digital agents, it may include APIs, databases, documents, enterprise systems, queues, event streams, monitoring systems, communication channels, users, workflows, and even other agents.

This is why the classical agent loop remains so useful:

environment → percepts → agent system → actions → environment

The loop matters because actions change the world, and the changed world changes future percepts.
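The thermostat from earlier makes a compact illustration of this loop. The sketch below is a toy simulation: the `Room` class, its heating and cooling rates, and the 20-degree setpoint are assumptions made up for this example.

```python
from dataclasses import dataclass


@dataclass
class Room:
    """Toy environment: a room that cools unless it is being heated."""
    temperature: float

    def percept(self) -> float:
        return self.temperature

    def apply(self, action: str) -> None:
        # Dynamics: heating adds 1.0 degree per tick; otherwise the room loses 0.5.
        self.temperature += 1.0 if action == "heat_on" else -0.5


def thermostat(percept: float, setpoint: float = 20.0) -> str:
    """Fixed policy: heat whenever the observed temperature is below the setpoint."""
    return "heat_on" if percept < setpoint else "heat_off"


room = Room(temperature=18.0)
trace = []
for _ in range(6):
    observed = room.percept()       # environment -> percepts
    action = thermostat(observed)   # percepts -> agent system -> actions
    room.apply(action)              # actions -> environment
    trace.append((observed, action))

print(trace)
```

Each action changes the room, and the changed room is what the thermostat perceives on the next tick, which is why the trace oscillates around the setpoint instead of settling after one decision.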

The environment therefore shapes agency directly. A model with no tools has little capacity to act. The same model embedded in a tool-rich, permissioned environment has much greater agency.

Governance: What Bounds the Agent?

If objectives provide direction and the environment provides affordances, governance provides the envelope within which the agent is allowed to operate.

Governance includes the rules, permissions, oversight mechanisms, and accountability structures that constrain what an agent may do.

It may come from the designer, the deployer, the organization, platform policy, regulation, safety architecture, runtime supervision, or human review.

Governance includes:

- permissions,

- access control,

- safety rules,

- escalation paths,

- approval gates,

- monitoring,

- logging,

- auditing,

- rate limits,

- interruptibility,

- and accountability structures.

This is why “agent as system” is useful. Governance rarely lives only inside the model. It is distributed across the architecture, the tasking interface, the environment, and the oversight mechanisms that surround the agent.

A useful shorthand:

Governance bounds agency.

Identity: Is It the Same Actor Over Time?

There is another concept worth naming, though I would not place it at the same level as agency, autonomy, and intelligence: identity.

I treat agency, autonomy, and intelligence as the primary axes of agentic capability. Identity is important, but in a different way.

Identity is the continuity of an agent as the same operational actor over time, with stable boundaries, permissions, memory surfaces, responsibilities, and auditability.

Identity is related to memory, but it is not the same thing.

Memory is retained information: prior interactions, learned preferences, intermediate state, historical outcomes, or accumulated experience. Memory improves intelligence by giving the agent context for future action.

Identity is the continuity of the actor to which that memory, authority, permissions, and accountability attach. It lets us say, “this is the same agent acting again,” not merely “this system has access to some stored information.”

A one-shot LLM call may be highly intelligent but have weak identity: it can reason well in the moment, but it is not necessarily treated as the same actor across time. An RPA bot may have limited intelligence but strong identity: it runs under a service account, has permissions, generates logs, and performs a recurring operational role. A long-running enterprise agent may need both: memory to improve its behavior and identity to support continuity, governance, and accountability.

Identity becomes especially important when agents operate over long horizons, across tools, or inside organizations. It allows permissions, audit trails, escalation paths, accumulated context, and accountability to attach to an agent as an ongoing entity.

So I would treat identity as a secondary system-level property: not what makes an agent agentic in the first place, but crucial once agents become persistent, governed, or organizational actors.

In sum:

Memory supports continuity of context; identity supports continuity of actorhood. Together, they support long-horizon intelligence and governance.

Translating Common AI-Agent Terms

Current discussions of AI agents use a vocabulary that is more engineering-oriented than theoretical: tools, skills, memory, planning, orchestration, workflows, guardrails, and context. These terms fit naturally into the framework above.

They are not separate theories of agency. They are implementation concepts that map onto the broader dimensions of agency, autonomy, intelligence, environment, governance, and identity.

Tools

Tools are external capabilities the agent can invoke.

More precisely, tools are actionable interfaces to the agent’s operational environment: APIs, databases, file systems, browsers, code interpreters, calculators, communication channels, enterprise systems, sensors, or actuators through which the agent can sense, compute, retrieve, or act.

In this framework, tools primarily expand agency. They increase what the agent can do.

A model with no tools may only produce text. The same model with access to tools can retrieve information, calculate, write files, update records, send messages, operate software, or trigger workflows.

But access to tools is not the same as unrestricted agency. Tool use is usually shaped by governance: permissions, policies, rate limits, approval gates, logging, audit requirements, and safety constraints.

So the role of tools cuts across the framework:

- Environment provides the tools or systems to act upon.

- Governance determines which tools the agent may use, under what conditions.

- Agency expands when the agent can invoke more tools or more powerful tools.

- Autonomy determines whether the agent can choose tools dynamically or only use them when a workflow specifies.

- Intelligence determines how well the agent selects and uses those tools.

In a nutshell:

Tools are environment affordances made available to the agent through governed interfaces.
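A minimal sketch of what a governed interface might look like follows. It assumes three governance mechanisms from the list earlier in this post (permissions, approval gates, and logging), and every name in it (`GovernedTools`, `needs_approval`, the stand-in tools) is hypothetical, not taken from any real agent framework.

```python
from typing import Callable, Dict, List, Set


class GovernedTools:
    """Hypothetical wrapper that checks permissions and approvals before any tool runs."""

    def __init__(
        self,
        tools: Dict[str, Callable[[str], str]],
        allowed: Set[str],
        needs_approval: Set[str],
    ) -> None:
        self.tools = tools
        self.allowed = allowed
        self.needs_approval = needs_approval
        self.audit_log: List[str] = []  # logging and auditing

    def call(self, name: str, arg: str, approved: bool = False) -> str:
        if name not in self.allowed:  # permissions
            self.audit_log.append(f"denied:{name}")
            raise PermissionError(f"{name} is not permitted")
        if name in self.needs_approval and not approved:  # approval gate
            self.audit_log.append(f"blocked:{name}")
            raise PermissionError(f"{name} requires human approval")
        self.audit_log.append(f"called:{name}")
        return self.tools[name](arg)


# Stand-in tools (assumptions for illustration only).
governed = GovernedTools(
    tools={
        "lookup": lambda q: f"result for {q}",
        "send_message": lambda m: f"sent: {m}",
        "delete_records": lambda t: f"deleted {t}",
    },
    allowed={"lookup", "send_message"},
    needs_approval={"send_message"},
)
```

With this setup, `governed.call("lookup", "vendor list")` runs immediately, `governed.call("send_message", "hi")` is refused until it is called with `approved=True`, and `delete_records` is never reachable even though the environment provides it. Every attempt, successful or not, lands in `audit_log`.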

Skills

Skills are reusable capabilities or procedural know-how that help an agent perform a class of tasks. A skill might include instructions, domain knowledge, scripts, examples, resources, or tool-use routines.

Skills primarily shape intelligence: they improve how well the agent selects and executes actions in a particular domain. They may also expand agency if they package access to new tools or workflows.

This is why “skills” are interesting: they sit between raw model intelligence and full agent autonomy. They give an agent structured competence without necessarily creating a separate agent.

Memory and State

Memory and state allow the agent to carry information across steps or interactions.

They support intelligence by improving context, continuity, learning, and planning. They may also support identity when memory is attached to a stable operational actor over time.

As previously discussed, memory and identity are not the same. Memory helps the agent choose better. Identity allows the system to be treated as the same actor across time.

Planning

Planning is a cognitive or decision process. It produces or revises a sequence of intended actions.

It answers:

What steps should be taken to pursue the objective?

Planning includes:

- decomposing an objective into subtasks,

- reasoning over alternative paths,

- sequencing actions,

- adapting the plan when new information arrives,

- deciding when enough has been done.

In this framework, planning primarily belongs under intelligence, because it improves the quality of action selection over time. It can also increase apparent autonomy when the agent is allowed to generate or revise its own plan at runtime. But the core of planning is not control over execution; it is reasoning about what should be done.

Orchestration and Workflows

Orchestration is the control structure that coordinates models, tools, memory, skills, and execution steps.

It answers:

How are the components invoked, sequenced, routed, monitored, retried, and stopped?

Orchestration includes:

- calling the model,

- invoking tools,

- routing between steps,

- managing state,

- enforcing loops,

- handling retries,

- applying guardrails,

- determining when to stop,

- coordinating multiple agents or skills.

In this framework, orchestration primarily relates to autonomy, because the key question is whether the control flow is mostly scripted by the designer or dynamically determined by the agent at runtime.

A workflow is a structured form of orchestration: a sequence or graph of steps, often with predefined control paths. Workflows can include AI models, tools, memory, and guardrails, but the order and conditions of execution are usually specified by the designer.

This is where Anthropic’s workflow-versus-agent distinction fits. In Anthropic’s framing, a workflow has predefined control paths, while an agent has more runtime control over what to do next.

So a workflow can be agentic in some respects — it may use tools, maintain state, pursue objectives, and incorporate intelligence — without being an “agent” in the stronger sense used by many practitioners. Its agency may be real, but its decisional autonomy is usually more limited.

A useful shorthand:

A workflow is scripted orchestration; an agentic loop is more self-directed orchestration.

Guardrails

Guardrails are constraints on what the agent may do or say.

They belong primarily to governance. They bound agency, shape autonomy, and sometimes compensate for imperfect intelligence.

Context

Context is the information made available to the agent at decision time: instructions, retrieved documents, tool results, conversation history, environment state, policy, and memory.

Context supports intelligence, because it improves the quality of action selection. It can also shape objectives and governance when it includes task instructions or policy constraints.

RPA as a Boundary Case

Robotic Process Automation is useful because it complicates the story.

An RPA bot can:

- act in a software environment,

- run unattended,

- execute tasks over time,

- and operate under a persistent identity.

So is it an agent?

In the classical AI sense, yes. It perceives a software environment and acts upon it.

But it usually has:

- narrow agency,

- high execution autonomy,

- low decisional autonomy,

- low intelligence,

- strong identity.

This makes RPA a boundary case. It shows that enterprise systems had agent-like properties before LLMs. What LLMs add is not agency from nothing, but richer intelligence and more flexible decisional autonomy.

Where Current Agentic AI Fits

Much of today’s agentic AI discourse conflates multiple concepts that are worth separating.

When a system is called “agentic,” it may mean that:

- it has access to tools,

- it can run across multiple steps,

- it can choose which tool to use,

- it can maintain state,

- it can pursue an objective,

- it can operate with limited supervision,

- or it can adapt based on feedback.

OpenAI’s practical guide similarly frames agents as systems that execute workflows end-to-end, and emphasizes models, tools, instructions, orchestration, and guardrails as core design components.

We now see distinctions being drawn between “agentic AI” and “AI agents” — a distinction that can seem puzzling if we look only at the ordinary semantics of those phrases. But in practice, people use these terms to emphasize different properties.

The framework described here helps us ask more precise questions:

- What is the system’s action space?

- How much of the control flow is scripted versus self-directed?

- How competent is its action selection?

- What environment does it act within?

- What objective is orienting its behavior?

- What governance constrains it?

- Does it operate under a stable identity?

These questions are more useful than trying to determine, in the abstract, whether a system “is agentic.”

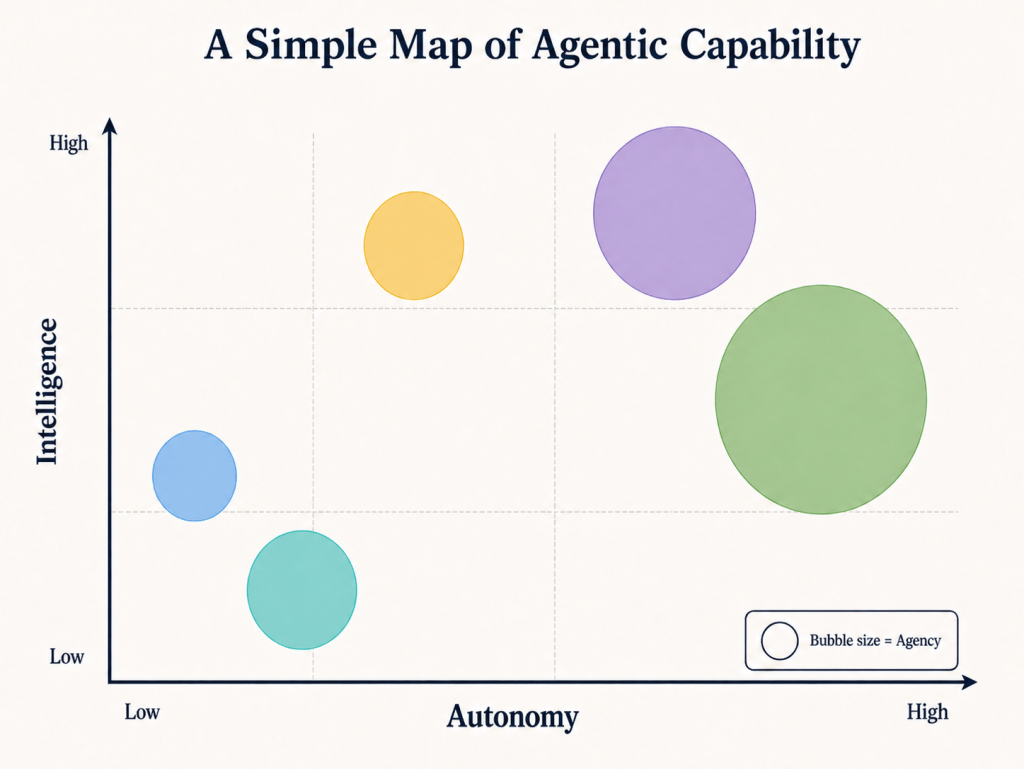

A Simple Map

One way to visualize the core capability axes is:

- X-axis: autonomy

- Y-axis: intelligence

- Bubble size: agency

Then we can place different systems in this space:

- Scripted workflow: low autonomy, variable intelligence, narrow-to-moderate agency

- RPA bot: high execution autonomy, low decisional autonomy, low intelligence, moderate agency

- LLM workflow: high intelligence, lower decisional autonomy, moderate agency

- Objective-driven LLM agent: higher decisional autonomy, high intelligence, broader agency

- Long-running enterprise agent: governed autonomy, variable intelligence, broad agency, strong identity

This is not an attempt to rank systems. It is a way to clarify the kind of agentic capability each system exhibits.

Limitations and Extensions

This framework is pragmatic. It fits much of today’s agentic AI discourse, but it is not universal. A few perspectives stretch it.

Ascribed Agency

Sometimes agency is not only a mechanism but also an attribution. Humans may treat a system as agentic because its behavior appears coherent, intentional, or socially meaningful.

Collective Agency

A multi-agent system, organization, or agentic workflow may exhibit agency at the collective level. Sometimes this agency is emergent: coherent behavior arises from the interactions of individual agents without any single agent or controller directing the whole.

But collective agency does not have to be purely emergent. In many systems, it is explicitly orchestrated. A coordinating layer may assign roles, route tasks, enforce protocols, manage dependencies, and determine when the collective has achieved its objective. In such cases, system-level agency is partly an architectural property, not merely an emergent phenomenon.

Mechanistically, the collective may be made of many interacting parts. Operationally, we often treat it as one actor: a team, organization, swarm, marketplace, or multi-agent system that pursues objectives and produces outcomes.

Impact and Power

In safety and governance discussions, the most important question may not be how agentic a system is, but how much change it can cause.

Two systems with similar intelligence and autonomy may differ dramatically in impact depending on their permissions, resources, and placement in real workflows.

These extensions do not undermine the core framework. They remind us that “agent” is both a technical and social construct.

Summary

For now, I find the following distinctions useful as I try to make sense of where agentic AI is going:

- Objectives: What organizes the agent’s behavior?

- Agency: What can it do?

- Autonomy: Who or what controls what it does next?

- Intelligence: How well does it choose?

- Environment: What world does it act within?

- Governance: What rules, permissions, and oversight constrain it?

- Identity: Is it treated as the same operational actor over time?

The primary axes of agentic capability are agency, autonomy, and intelligence. Objectives orient those capabilities. The environment affords and constrains them. Governance bounds them. Identity matters when agents become long-running, auditable, or organizational actors.

This does not settle the definition of an agent. But it gives us a way to compare systems without collapsing everything into vague phrases such as “agentic AI” or “AI agent.”

That, to me, is the point of agentics: to treat AI agents as objects of scientific inquiry, not just engineering artifacts or product features. A scientific field begins by making distinctions visible — clarifying terms, separating concepts, comparing cases, and refining vocabulary as evidence and practice evolve. The vocabulary proposed here is therefore not meant to be final. It is a starting point for a more systematic inquiry into what agents are, how they differ, and how they behave as AI technology advances and new use cases emerge.

-SriG
